I keep hearing the same story from senior leaders. Their businesses are running AI pilots, often quite a few of them, but none grows into anything substantial. The pilots work in isolation, the teams move on to the next one, and none of them ever reaches the point of scaling. The business case for going further never quite comes together.

This isn't a technology problem. It's an operating model problem.

The pilot trap

What often seems to happen is that a company picks a safe use case, something like document processing or customer service triage. They build a proof of concept with a small team, often with a vendor partner. It works well enough in a controlled environment. Everyone agrees it's promising.

Then comes the question: "How do we roll this out across the organisation?"

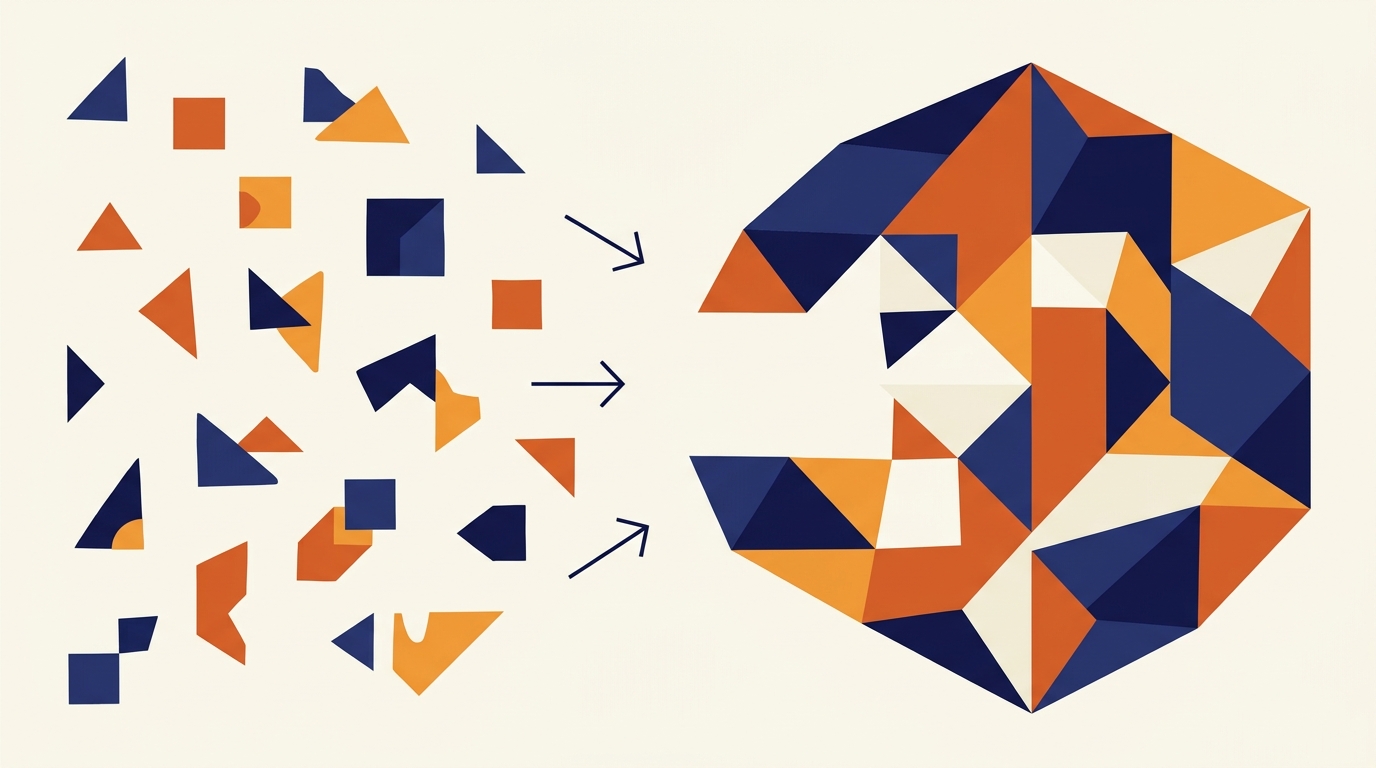

That is where the whole thing stalls. Scaling AI isn't about replicating a pilot. It is about rewiring how the organisation makes decisions, allocates resources, and measures success. The pilot proved the technology works - good. But it didn't prove the organisation was ready for it.

Three gaps that kill scale

Across transformation programmes, I see three gaps that consistently prevent pilots from becoming enterprise capabilities.

1. The governance gap

Most pilots run outside normal governance. They may have some form of executive air cover, a dedicated budget, and a small team with unusual autonomy. That may be why they succeed, but it is also why they can't scale. The moment you try to move from one team to twenty, you hit procurement processes, data governance frameworks, and compliance reviews that were never designed for AI workloads.

Build AI governance into your existing frameworks from day one, not as a bolt-on after the pilot succeeds.

2. The talent gap

A pilot needs about three to five people who understand both the technology and the business problem. Scaling needs that capability embedded across multiple teams. Most organisations don't have a plan for this. They assume the pilot team will somehow replicate itself. Invest in capability building alongside the pilot.

3. The measurement gap

Pilots typically get measured on technical metrics: accuracy, speed, cost of the tool itself. Enterprise value requires business metrics: revenue impact, customer satisfaction, time to decision. When you can't connect the AI output to a business outcome, it becomes hard to defend investment in the scale-up.

Define business KPIs before the pilot starts. Measure what matters to the P&L, not what matters to the engineering team.

The operating model shift

Organisations that successfully scale AI treat it not as a project but as a capability. That means changes to three things:

1. Structure. A central AI function that sets standards, combined with embedded teams that apply those standards to specific business problems.

2. Process. A repeatable pathway from idea to pilot to scale, with clear stage gates and business ownership at each step.

3. Culture. Leaders who understand that AI changes how work gets done, not just what tools people use.

This isn't glamorous work. It's operating model design, change management, and governance. But it's the work that turns a successful pilot into enterprise-wide value.

What this means for you

If you're sitting on a successful pilot that hasn't scaled, ask yourself three questions:

Do I have governance that supports AI at enterprise scale, or does the pilot only work because it bypasses normal processes?

Do I have the talent to embed AI capability across multiple teams, or am I relying on a small group of specialists?

Am I measuring business outcomes, or just technical performance?

If any of these questions exposes a weakness, the gap isn't in technology. It's in the operating model. And operating models are something you can fix.