Lloyds Banking Group began piloting an AI "satnav" for investments through Scottish Widows in April 2026. Savvy Wealth launched an agentic AI platform for independent financial advisers in the same month. The wealth management industry is moving from AI as a back-office tool to AI as a participant in the advice process. The design choices made now will determine whether these systems survive regulatory scrutiny.

The FCA has not published specific rules for AI in advice. It does not need to. The existing framework already sets the standard: Consumer Duty, SMCR, the suitability requirements under MiFID II (as retained in UK law), and the Data (Use and Access) Act 2025. An AI agent that participates in generating investment recommendations must meet the same suitability obligations as a human adviser. The question is how to design the system so that it does.

The Suitability Standard for AI

Suitability in wealth management has three components: the recommendation must meet the client's investment objectives, the client must be able to bear financially the investment risks consistent with those objectives, and the client must have the knowledge and experience to understand the risks involved.

For a human adviser, meeting this standard involves a conversation, a fact-find, professional judgement, and documentation. For an AI agent, it requires the same information, the same analysis, and the same documentation, but the mechanism is different. The agent must gather complete and accurate client data, apply it to a suitability framework, generate a recommendation that meets the standard, and produce documentation sufficient for a regulatory review.

The point of failure in most AI suitability systems is not the recommendation algorithm. It is the data gathering. An agent that generates a technically sound portfolio recommendation based on incomplete or inaccurate client data has produced an unsuitable recommendation, regardless of the quality of the algorithm.

Design Pattern 1: Structured Data Gathering with Completeness Checks

The first design requirement is that the agent cannot proceed to recommendation without a complete suitability dataset. This sounds obvious. In practice, it is the most common point of failure.

A human adviser intuitively knows when they do not have enough information. They ask follow-up questions. They probe inconsistencies. They recognise when a client's stated risk appetite contradicts their financial circumstances. An AI agent must be designed to do the same, but explicitly.

In firms building AI suitability systems, I see the same problem repeatedly: a data gathering process that accepts partial information and proceeds anyway. One firm I worked with had built a technically impressive recommendation engine, but in testing, over 40% of the suitability assessments were generated with incomplete risk capacity data because the agent did not enforce field completion. The fix was conceptually simple, but it required redesigning the conversation flow from scratch.

The pattern that works:

Mandatory field completion. The agent defines a minimum dataset required for suitability assessment. This includes investment objectives (growth, income, preservation), time horizon, risk tolerance (both attitudinal and capacity), existing assets and liabilities, income and expenditure, tax status, and knowledge and experience with relevant investment types. The agent cannot generate a recommendation until all fields are populated.

Consistency validation. The agent checks for contradictions in the client data. A client who states a high risk tolerance but has no emergency fund and a short time horizon presents a consistency problem. The agent must flag this and seek clarification rather than accepting the data at face value.

Dynamic follow-up. When a client's answers are ambiguous or incomplete, the agent generates targeted follow-up questions. "You mentioned you are comfortable with investment risk. Can you tell me how you would respond if your portfolio fell by 20% in a single quarter?" This is not a script. It is a conditional response to the specific gaps in the client's data.

Audit trail from first interaction. Every question asked, every answer received, and every follow-up triggered must be logged with timestamps. The suitability assessment begins at first contact, not at the point of recommendation.
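The four elements above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the field names, the `FactFind` class, the `emergency_fund_months` field, and the single consistency rule (with its five-year horizon cut-off) are all assumptions made for the example. A real system would carry many more rules and a tamper-evident log.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any

# Hypothetical minimum dataset, based on the fields listed above.
REQUIRED_FIELDS = (
    "objective", "time_horizon_years", "risk_attitude", "risk_capacity",
    "assets", "liabilities", "income", "expenditure", "tax_status",
    "knowledge_experience",
)


@dataclass
class FactFind:
    answers: dict[str, Any] = field(default_factory=dict)
    audit_log: list[dict[str, Any]] = field(default_factory=list)

    def record(self, question: str, field_name: str, answer: Any) -> None:
        """Store an answer and log the exchange with a UTC timestamp."""
        self.answers[field_name] = answer
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "question": question,
            "field": field_name,
            "answer": answer,
        })

    def missing_fields(self) -> list[str]:
        """Required fields that have no answer yet."""
        return [f for f in REQUIRED_FIELDS if self.answers.get(f) is None]

    def inconsistencies(self) -> list[str]:
        """One illustrative rule: high stated risk appetite with no
        emergency fund and a short horizon (under 5 years, an assumed cut-off)."""
        a = self.answers
        flags = []
        if (a.get("risk_attitude") == "high"
                and (a.get("emergency_fund_months") or 0) == 0
                and (a.get("time_horizon_years") or 0) < 5):
            flags.append("high risk attitude conflicts with no "
                         "emergency fund and a short time horizon")
        return flags

    def ready_for_recommendation(self) -> bool:
        """The gate: no recommendation until data is complete and consistent."""
        return not self.missing_fields() and not self.inconsistencies()
```

The recommendation step would call `ready_for_recommendation()` first and, when it returns `False`, use `missing_fields()` and `inconsistencies()` to decide which follow-up question to ask next.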

Design Pattern 2: Bounded Recommendation Space

The second design requirement is that the agent operates within a defined recommendation space that is pre-approved by the firm's compliance function.

An unconstrained AI agent could, in theory, recommend any investment product available in the market. This is a regulatory risk. The firm must be able to demonstrate that the products recommended by the agent are ones the firm has assessed, approved, and can support. The recommendation space is the set of products, portfolio models, and asset allocations that the agent is authorised to recommend.

The pattern that works:

Pre-approved product universe. The agent can only recommend products from the firm's approved list. If a client's needs fall outside the available product set, the agent escalates to a human adviser rather than extending its recommendation space.

Risk-return boundaries. For each client risk profile, the agent has defined boundaries for asset allocation, concentration limits, and liquidity requirements. The agent cannot recommend a portfolio that breaches these boundaries, regardless of the model's output.

Suitability scoring with threshold enforcement. The agent generates a suitability score for each recommendation, based on the alignment between the client's profile and the recommended portfolio. Recommendations below a lower threshold are blocked and escalated. Recommendations in the borderline zone between the lower and upper thresholds require human review. Only recommendations at or above the upper threshold are delivered directly.
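As a rough sketch of how these three controls combine into a single routing decision, consider the following. The product identifiers, equity caps, and score thresholds are hypothetical placeholders, and the borderline zone is assumed to sit between two thresholds. In practice the values would come from the firm's compliance function, and the boundaries would cover concentration and liquidity as well as asset allocation.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    DELIVER = "deliver"
    HUMAN_REVIEW = "human_review"
    ESCALATE = "escalate"


# Hypothetical values for illustration only.
APPROVED_PRODUCTS = {"MODEL_CAUTIOUS", "MODEL_BALANCED", "MODEL_ADVENTUROUS"}
MAX_EQUITY_BY_PROFILE = {"cautious": 0.30, "balanced": 0.60, "adventurous": 0.90}
BLOCK_BELOW = 0.60    # scores under this are blocked and escalated
DELIVER_FROM = 0.80   # scores at or above this may be delivered directly


@dataclass(frozen=True)
class Recommendation:
    product_id: str
    equity_weight: float       # fraction of the portfolio held in equities
    suitability_score: float   # 0.0 to 1.0, produced by the scoring model


def route(rec: Recommendation, risk_profile: str) -> Route:
    """Apply the hard boundaries first, then the score thresholds."""
    if rec.product_id not in APPROVED_PRODUCTS:
        return Route.ESCALATE   # outside the pre-approved universe
    if rec.equity_weight > MAX_EQUITY_BY_PROFILE[risk_profile]:
        return Route.ESCALATE   # breaches the risk-return boundary
    if rec.suitability_score < BLOCK_BELOW:
        return Route.ESCALATE   # blocked on score
    if rec.suitability_score < DELIVER_FROM:
        return Route.HUMAN_REVIEW   # borderline zone
    return Route.DELIVER
```

The ordering is the design choice worth noting: the boundary checks run before the score is consulted, so a high score from the model can never override a hard limit.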

Design Pattern 3: Meaningful Human Intervention

The Data (Use and Access) Act 2025, effective from February 2026, requires that where a decision is based solely on automated processing and has a significant effect on an individual, the individual has the right to meaningful human intervention. A human merely rubber-stamping a machine's output does not meet this threshold.

For wealth management, this means:

Tiered review based on recommendation risk. Low-risk, routine recommendations (rebalancing within existing parameters, tax-wrapper optimisation within established limits) can be processed with lighter human oversight, reviewed in batches with exception flagging. High-risk recommendations (significant asset allocation changes, new product types, drawdown strategies) require individual human review before delivery.

Reviewer competence. The human reviewer must be qualified to assess the recommendation's suitability. A compliance administrator checking boxes is not meaningful human intervention. A qualified adviser reviewing the recommendation against the client's profile and applying professional judgement is.

Override documentation. When a human reviewer modifies or rejects an AI recommendation, the reasoning must be documented. This creates a feedback loop: patterns of human override indicate areas where the AI's suitability model needs recalibration.
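A minimal sketch of the tiering and override feedback loop might look like this. The recommendation type labels, the `Override` record, and the reason codes are illustrative assumptions. Reviewer competence is not modelled here because it is an organisational control rather than a code path.

```python
from collections import Counter
from dataclasses import dataclass

# Hypothetical labels for the high-risk categories described above.
HIGH_RISK_TYPES = {"allocation_change", "new_product_type", "drawdown_strategy"}


def review_tier(recommendation_type: str) -> str:
    """High-risk types get individual review; the rest are batched."""
    if recommendation_type in HIGH_RISK_TYPES:
        return "individual_review"
    return "batch_review"


@dataclass(frozen=True)
class Override:
    recommendation_id: str
    reviewer_id: str
    action: str        # "modified" or "rejected"
    reason_code: str   # short category, e.g. "capacity_overstated"
    rationale: str     # the reviewer's free-text reasoning


def record_override(log: list[Override], override: Override) -> None:
    """Refuse to store an override that has no documented reasoning."""
    if not override.rationale.strip():
        raise ValueError("an override must include the reviewer's rationale")
    log.append(override)


def override_patterns(log: list[Override]) -> Counter:
    """Count overrides by reason code to show where the model needs recalibration."""
    return Counter(o.reason_code for o in log)
```

Running `override_patterns(log).most_common(3)` over a quarter of reviews gives a first view of where human judgement and the model most often disagree.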

Design Pattern 4: Outcome Monitoring and Feedback

A suitability assessment is not a one-time event. A recommendation that was suitable at the point of sale can become unsuitable as the client's circumstances change. An AI system that continues to manage a client's portfolio must monitor for suitability drift.

Life event detection. The agent monitors for signals that a client's circumstances have changed: large deposits or withdrawals, changes in regular contributions, age milestones, communications indicating changed circumstances. Each signal triggers a suitability reassessment.

Outcome tracking against objectives. Is the portfolio delivering outcomes consistent with the client's stated objectives? A client who wanted capital preservation and has experienced a 15% drawdown needs a review, even if the drawdown is within the risk parameters the client originally accepted.

Systematic suitability review. On a defined schedule (annual, or triggered by market events), the agent reassesses the suitability of each client's current portfolio against their current profile. Changes in the client's circumstances, changes in market conditions, and changes in the product set all feed into this review.
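The three monitoring checks can be expressed as a single function that returns the reasons a reassessment is due. This is a sketch under stated assumptions: the `ClientSnapshot` fields and the default limits (10% cash flow, 10% drawdown for a preservation objective, 365 days between reviews) are hypothetical, and detecting changed circumstances from client communications is out of scope here.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ClientSnapshot:
    objective: str            # e.g. "preservation", "income", "growth"
    drawdown: float           # peak-to-trough fall; 0.15 means 15%
    largest_cash_flow: float  # largest recent deposit or withdrawal,
                              # as a fraction of portfolio value
    last_review: date


def reassessment_triggers(
    snapshot: ClientSnapshot,
    today: date,
    cash_flow_limit: float = 0.10,
    preservation_drawdown_limit: float = 0.10,
    review_interval_days: int = 365,
) -> list[str]:
    """Return the reasons a suitability reassessment is due, if any."""
    triggers = []
    # Life event signal: an unusually large deposit or withdrawal.
    if abs(snapshot.largest_cash_flow) >= cash_flow_limit:
        triggers.append("life_event_signal")
    # Outcome check: losses that are out of line with a preservation objective.
    if (snapshot.objective == "preservation"
            and snapshot.drawdown >= preservation_drawdown_limit):
        triggers.append("outcome_off_objective")
    # Scheduled review: the defined interval has elapsed.
    if (today - snapshot.last_review).days >= review_interval_days:
        triggers.append("scheduled_review")
    return triggers
```

An empty list means no reassessment is due. Any non-empty result would feed back into the same data gathering and recommendation gates used for the original assessment.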

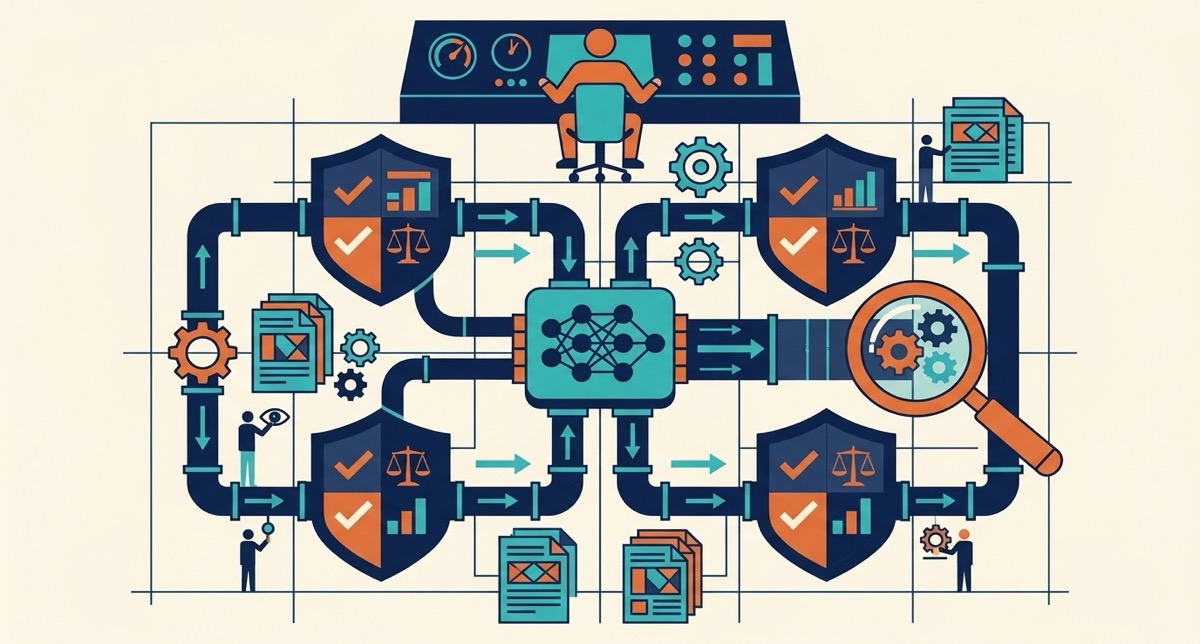

The Agent-to-Agent Architecture

The emerging pattern in wealth management is agent-to-agent: a general-purpose AI (the customer's assistant) interacts with a regulated AI agent (the firm's suitability engine). The FCA acknowledged this model in the Mills Review as having potential to support household financial optimisation.

The suitability implications are significant. The firm's agent must validate the data it receives from the customer's agent. It cannot assume that the customer's general-purpose AI has gathered accurate or complete financial data. The handoff between agents must include a data validation step, and the firm's agent must be prepared to request additional information if the incoming data is insufficient for a suitability assessment.
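The validation step at the handoff could be sketched as follows. The schema, field names, and message format are hypothetical, since no standard agent-to-agent payload for financial data is assumed here. The point is only that the firm's agent checks presence, type, and basic plausibility before treating incoming data as a fact-find.

```python
from typing import Any

# Hypothetical handoff schema: field name -> accepted Python types.
HANDOFF_SCHEMA = {
    "objective": (str,),
    "time_horizon_years": (int, float),
    "annual_income": (int, float),
    "risk_capacity": (str,),
    "source": (str,),  # how the customer's agent obtained the data
}


def validate_handoff(payload: dict[str, Any]) -> list[str]:
    """Return the problems the firm's agent must resolve before assessing
    suitability. An empty list means the payload passes these basic checks."""
    problems = []
    for name, accepted in HANDOFF_SCHEMA.items():
        value = payload.get(name)
        if value is None:
            problems.append(f"missing: {name}")
        # bool is a subclass of int in Python, so reject it explicitly.
        elif isinstance(value, bool) or not isinstance(value, accepted):
            problems.append(f"wrong type: {name}")
    horizon = payload.get("time_horizon_years")
    if (isinstance(horizon, (int, float))
            and not isinstance(horizon, bool)
            and horizon <= 0):
        problems.append("out of range: time_horizon_years")
    return problems
```

Each entry in the returned list maps naturally to a request back to the customer's agent, or to the client directly, for the missing or implausible item.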

This architecture is the future of mass-market wealth management. The firms that design their suitability engines for agent-to-agent interaction now will have a structural advantage when this becomes the dominant channel.

*To discuss how the 90-Day AI Acceleration programme can help your firm design suitability-safe AI agents, contact the Value Institute.*